
Can student persistence be predicted before students enroll in college?

As a statistician for Noel-Levitz, I spend a lot of time developing predictive models at the search stage. Predictive modeling uses enrollment data of current students to assess the enrollment probability of prospective students. Campuses can then use the resulting model to estimate how likely each prospective student is to enroll, and they can do so at various points in the enrollment funnel.

Noel-Levitz has a predictive modeling service for the search stage, SMART Approach, where campus historical outcomes are matched to survey response information from the National Research Center for College & University Admissions (NRCCUA). The resulting model allows campuses to purchase qualified NRCCUA names using a score based on each student’s likelihood of enrolling.

When discussing strategies for purchasing search names, the topic sometimes turns to the longer-term concern of student persistence. Colleagues and clients have asked me whether it is possible to develop a SMART Approach model that predicts first-year to second-year student persistence. The question makes a lot of sense. More and more campuses are focusing resources on student retention, so why not use the predictive power of SMART Approach to purchase search names based on the likelihood to persist?

The challenge with this approach is that many of the characteristics that drive a student’s persistence decision only emerge after the search stage. Social integration, academic success, and financial ability are often not fully understood until the student has enrolled. In fact, this is why Noel-Levitz uses a separate predictive modeling tool, the Student Retention Predictor, to predict persistence among currently enrolled students.

Still, I wanted to see the impact of purchasing search names with the longer-term focus of persistence. Eastern Kentucky University is a campus that uses both SMART Approach for search name selection and the Student Retention Predictor for identifying first-year enrollees who are at risk of not persisting to the second year. With both in place, I could build a search-stage model that scores names on persistence and then compare its predictions against those of a dedicated student retention model.

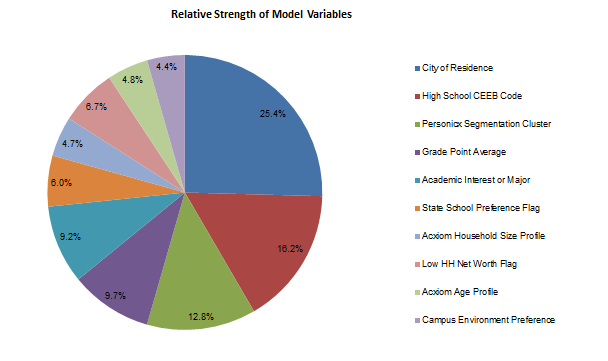

I developed a new SMART Approach model for Eastern Kentucky University using year-to-year persistence rather than enrollment as the outcome variable. The pie chart below shows the variables and their relative strength for the original SMART Approach model based on enrollment. This model had an AUC of 0.894, meaning that if we were to randomly select an enrollee and a non-enrollee from the modeling file, the probability that the enrollee has a higher model score than the non-enrollee is 0.894. (In general terms, that AUC means this is a very good model and is typical of the results we see with SMART Approach clients.)
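The pairwise reading of AUC described above can be checked directly on a small example. The sketch below is only an illustration: the function name `pairwise_auc` and the scores are made up, and it is not how SMART Approach computes its statistics. It compares every enrollee score with every non-enrollee score and reports the share of pairs in which the enrollee scores higher, counting ties as half.

```python
from itertools import product


def pairwise_auc(positive_scores, negative_scores):
    """Share of (positive, negative) pairs where the positive scores higher.

    Ties count as half a win, which matches the usual definition of AUC.
    """
    if not positive_scores or not negative_scores:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for pos, neg in product(positive_scores, negative_scores):
        if pos > neg:
            wins += 1.0
        elif pos == neg:
            wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))


# Hypothetical model scores, invented for illustration only.
enrollee_scores = [0.9, 0.8, 0.4]
non_enrollee_scores = [0.7, 0.3, 0.2, 0.1]

auc = pairwise_auc(enrollee_scores, non_enrollee_scores)
print(round(auc, 3))  # 0.917 (11 of the 12 pairs favor the enrollee)
```

Looping over all pairs is fine for a toy example. On a full modeling file, a rank-based calculation gives the same number far more efficiently.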

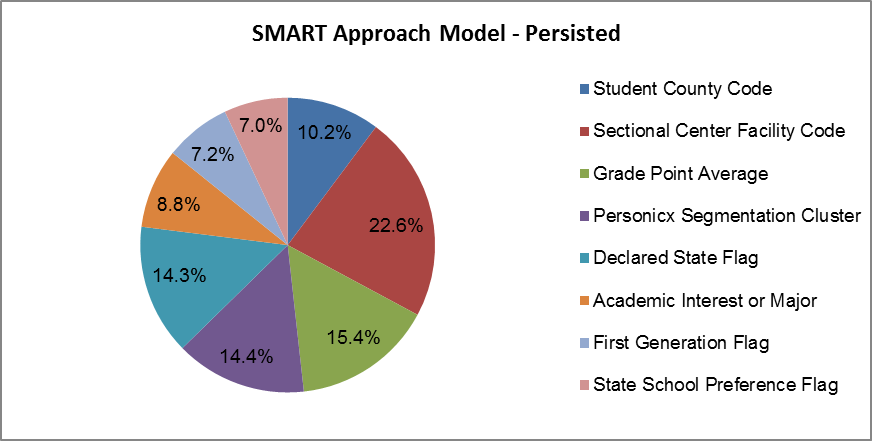

I then developed a second SMART Approach model where persistence was the dependent variable. The pie chart below shows the variables and their relative strength for the new model based on persistence. This model had an AUC of 0.896, nearly identical to that of the original enrollment model, again indicating a strong model.

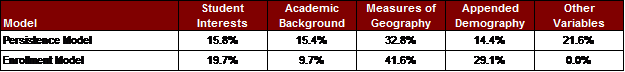

The two models shared many of the same variables. However, there were some key differences in the type of information each model contained. The table below outlines how the two models differed, grouping the variables that predicted enrollment or persistence into five general categories. The higher a category’s percentage, the greater its influence on the model.

You can see that the persistence model places more emphasis on academic background and less emphasis on geography and appended demography. The persistence model included the First-Generation Flag, with first-generation college students being less likely to persist. The Declared State Flag also entered the persistence model. This variable shows that a student has expressed an interest in attending a school in the state where the institution is located.

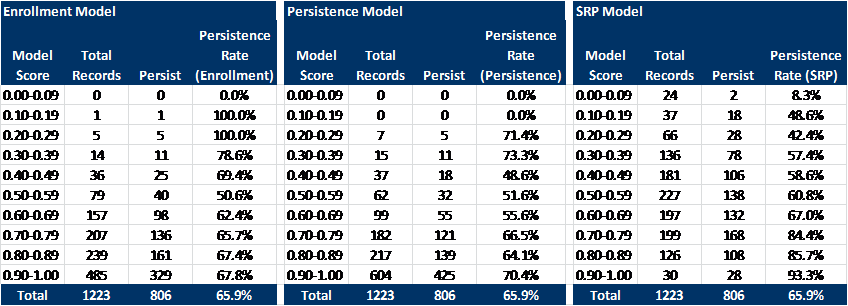

Once the new persistence model had been completed, I wanted to see how well both the enrollment and persistence models predicted first-to-second-year retention. Using the group of students who enrolled as first-time freshmen in the fall of 2010 and seeing who returned in the fall of 2011, I assigned predictive scores based on both the persistence model and the enrollment model. I could then compare these scores with the Student Retention Predictor model scores to see how pre-enrollment retention predictions compared to a model built after students had already enrolled.

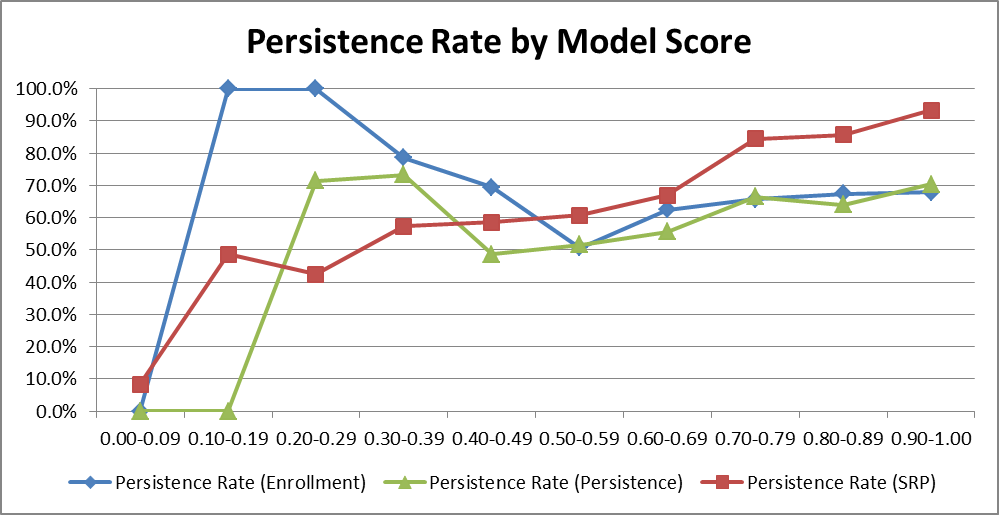

Noel-Levitz predictive models assign a model score to each student that ranges from 0.0 to 1.0. Lower scores indicate that the student is less likely to enroll/persist, while higher scores indicate that the student is more likely to enroll/persist. “Total records” is the number of students in each score range, and “Persist” is the number of those students who actually persisted. The “Persistence Rate” is the percentage of students in each score range who persisted. This rate should increase as the model score increases.
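For readers who want to build a similar table from their own data, here is a minimal sketch of the calculation. It assumes ten equal-width score ranges, which may not match the ranges used in the table above, and the function name `persistence_by_band` and the sample records are hypothetical.

```python
def persistence_by_band(records, n_bands=10):
    """Summarize persistence by model score range.

    records: iterable of (score, persisted) pairs with score in [0.0, 1.0].
    Returns one row per score range with total records, number who
    persisted, and persistence rate (None when the range is empty).
    """
    totals = [0] * n_bands
    persisted = [0] * n_bands
    for score, did_persist in records:
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score out of range: {score}")
        # A score of exactly 1.0 belongs to the top range.
        band = min(int(score * n_bands), n_bands - 1)
        totals[band] += 1
        persisted[band] += int(bool(did_persist))
    rows = []
    for band in range(n_bands):
        rate = persisted[band] / totals[band] if totals[band] else None
        rows.append({
            "low": band / n_bands,
            "high": (band + 1) / n_bands,
            "total_records": totals[band],
            "persist": persisted[band],
            "persistence_rate": rate,
        })
    return rows


# Hypothetical (score, persisted) records, invented for illustration only.
sample = [
    (0.15, False), (0.18, True),
    (0.52, True), (0.55, False), (0.58, True),
    (0.95, True), (1.0, True),
]

table = persistence_by_band(sample)
for row in table:
    if row["total_records"]:
        print(row)
```

If the model ranks students well, the persistence rate should rise from the lowest score range to the highest, which is the pattern to look for in the output.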

The following chart plots these results.

As you can see, the two SMART Approach models predicted actual student persistence at very similar rates and were nearly identical for model scores of 0.5 and above. However, as expected, neither model performed as well as the Student Retention Predictor model, which predicted persistence using characteristics available at the enroll stage. These results imply that the characteristics available at the search stage are well suited to predicting initial student enrollment, but are not adequate to differentiate between students who will enroll and those who will persist to the second year.

What does this mean for campuses? One case study is not definitive, but it is not surprising that the dedicated retention model built on post-enrollment data outperformed models built on pre-enrollment data. It illustrates that the behavior of prospective students and the behavior of enrolled students differ enough to warrant separate models. It also shows that there are no shortcuts in student retention modeling: predicting retention requires the same dedication and resources as predicting initial enrollment.

What about your campus? Have you tried to model enrollment or persistence behavior, or have questions about how to do this? Send me any questions by e-mail and I can share my experience and insights with you on this very intriguing process.