
Artificial Intelligence for Higher Education: What It Is and What to Look Out For

This blog includes contributions from my RNL colleagues David Palmer, VP of Artificial Intelligence Technology Strategy, and Solomon Grey, Director Program Management.

Higher education is in the middle of an AI transformation. Advances in AI are arriving so rapidly, and reshaping how we analyze data, recruit prospective students, serve current students, engage alumni, and work on campus so quickly, that it can be difficult to keep up. In fact, while higher ed has been abuzz about AI in recent months, many campus professionals are unsure how AI works, how it helps, and how to use it responsibly.

RNL addressed these concerns in our webinar AI 101: Unveiling the Basics of AI University Innovation. My colleagues went into detail about different AI models and the limits and risks of AI. We have also launched RNL Edge, our suite of AI solutions designed specifically for higher education. These solutions help you engage students and alumni, talk to your CRM to get instant insights, and work more efficiently than ever.

What is artificial intelligence for higher education?

Part of the confusion around artificial intelligence for higher education is that there are many ways to experience AI. In fact, we all experience AI daily. If you have used Alexa or Siri on your devices, you have used AI. The recommendations we receive from YouTube or Netflix are driven by AI. And of course, if you have used ChatGPT, that’s also AI.

What is AI exactly? It’s the development of computer systems capable of performing tasks that typically require human intelligence. AI tries to mimic human intelligence in a machine. While there has been a lot of discussion about whether AI can really “think,” it cannot. Instead, it is a simulation of human intelligence and problem-solving capabilities.

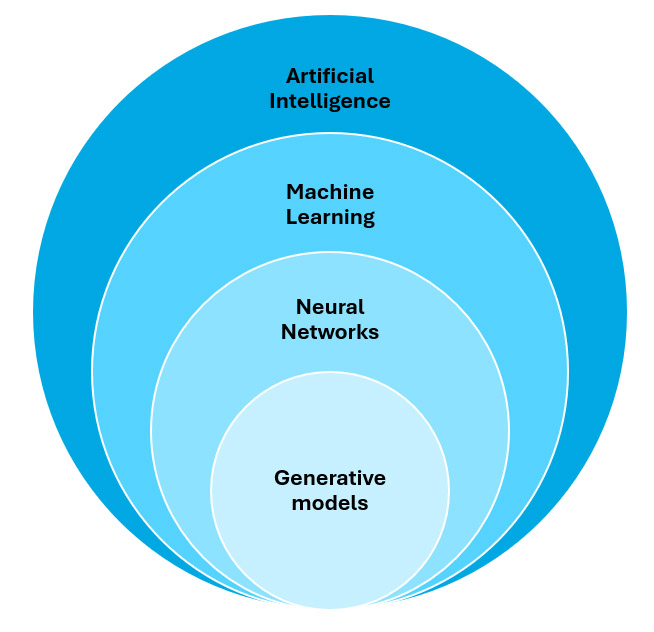

There are related terms and subfields of AI. Machine learning gives computers the ability to learn by combining data with algorithms to train a model that can make decisions without being explicitly programmed to do so. Neural networks are a specific kind of machine learning model inspired by the human brain, and they can recognize patterns and make sense of complex data. Generative AI is also a subset of machine learning, using information captured in a neural network to create new data based on what it has learned, such as text, images, and audio.
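To make "learning from data" a little more concrete, here is a minimal Python sketch. It is only a toy under stated assumptions: the data is made up, the function names `train_threshold` and `predict` are hypothetical, and real machine learning models are far more sophisticated. The point is simply that the decision rule (the cutoff) is found from examples rather than typed in by a programmer.

```python
# Toy illustration: the program finds its own decision rule from examples.
# All data below is invented for illustration only.

def train_threshold(examples):
    """Pick the cutoff that classifies the most training examples correctly.

    examples: list of (value, label) pairs, where label is True or False.
    Returns the learned cutoff; values >= cutoff are predicted True.
    """
    candidates = sorted({value for value, _ in examples})
    best_cutoff, best_correct = candidates[0], -1
    for cutoff in candidates:
        # Count how many examples this cutoff would get right.
        correct = sum((value >= cutoff) == label for value, label in examples)
        if correct > best_correct:
            best_cutoff, best_correct = cutoff, correct
    return best_cutoff


def predict(cutoff, value):
    """Apply the learned rule to a new, unseen value."""
    return value >= cutoff


# Hypothetical data: (number of emails opened, whether the student applied)
training_data = [(0, False), (1, False), (2, False), (4, True), (5, True), (7, True)]

cutoff = train_threshold(training_data)
print(cutoff)              # the rule the "model" learned from the data
print(predict(cutoff, 6))  # prediction for a student who opened 6 emails
```

Nobody told the program where to draw the line. If the training data changed, the learned cutoff would change with it, which is also why the quality of the training data matters so much.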

Generative AI is the AI advance that has generated the greatest buzz in the last year, especially because of the popularity of ChatGPT. ChatGPT and similar digital assistants or bots use Large Language Models (LLMs), a type of generative AI that excels at understanding and generating human language, or natural language. This capability lets you interact with AI conversationally: you ask questions and make requests in natural language and receive answers back in natural language.

Open-source LLMs vs Proprietary LLMs aka public vs protected

Open-source LLMs are models whose code and training methods are publicly available, offering greater flexibility and customization. Unlike proprietary models, open-source LLMs are not controlled by a single entity; instead, they can be modified and improved by any user, which enables innovation and adaptation. This openness allows users to train the model on specific data sets tailored to their interests, such as using information from their own CRM to better reflect the specifics of their institution, rather than relying solely on knowledge based on publicly available data from the Internet.

Proprietary LLMs keep their technology, data, and research private. A well-known example is OpenAI’s ChatGPT, which is trained on a broad range of texts from the Internet. While this type of model offers several benefits, it also has drawbacks related to accuracy, bias and reliability, which will be discussed shortly. Most importantly for higher education, the information used in these systems is not private, raising concerns including data security and governance.

Discover RNL Edge, the AI solution for higher education

RNL Edge is a comprehensive suite of higher education AI solutions that will help you engage constituents, optimize operations, and analyze data instantly—all in a highly secure environment that keeps your institutional data safe. With limitless uses for enrollment and fundraising, RNL Edge is truly the AI solution built for the entire campus.

What are the limits and risks of AI?

Understanding how AI works also helps us understand the limits and risks of artificial intelligence for higher education. The thing to remember is that conversational AI does not know it’s having a conversation with you. It is relying on how it is trained and built to give you responses. That makes it very important to know how the AI tools you use are trained and what guardrails are in place, because there are limits and risks with AI that can cause (and have caused) real issues for organizations.

Unwanted behavior: This can range from inappropriate or irrelevant prompts from users (such as asking a chatbot for help with a homework problem) to inappropriate responses from the AI (sharing how to cheat on homework, for example). It can also include privacy violations such as accepting or offering sensitive information like student records. You want to make sure that all the responses your AI tools provide are limited to the information that should be provided and consistent with the voice of your institution.

Bias: Because many AI tools are trained on vast quantities of data that are already out in the world, bias can creep into the models. Those biases can then be propagated through all of your results from that AI. This can lead to skewed results, biases in data, biases introduced by user input, feedback loop biases, and a number of other pitfalls. You need to be able to trust how your AI tools are being trained and understand how biases are being addressed. Also, addressing bias is an ongoing process to ensure bias does not creep into models that already exist.

Accuracy: This is one of the more well-known risks as there has been media coverage of AI-generated pictures of people with too many fingers and high-profile cases of AI providing inaccurate information. Remember that AI has no inherent ability to know when it is telling you the truth, so it will produce results that it predicts are the most likely response according to its existing algorithms. These inaccuracies can be in terms of factual accuracy as well as accuracy of voice. Does your AI represent your mission and your voice accurately?

Security: As I mentioned in the discussion of open-source vs. proprietary LLMs, security is a key consideration when using AI tools. The simple rule of thumb is to use “generic” AI tools for generic purposes, but private AI tools when handling sensitive information. For instance, summarizing a student transcript should absolutely be done on a closed AI system that is private and secure.

Regulation: Finally, when you are using AI tools, you want to know that the creators of those tools comply with current regulations and are prepared to comply with future ones.
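To make the accuracy risk above more concrete, here is a deliberately tiny Python sketch of a program that predicts the most likely next word. It is an assumption-laden stand-in, not how any real LLM is built: real models use neural networks trained on enormous data sets, and the names `train_bigrams` and `most_likely_next` are hypothetical. It does show the core idea that the answer is whatever appeared most often in training, whether or not it is true.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def most_likely_next(following, word):
    """Return the follower seen most often in training, or None if unseen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Invented training text. Suppose the real deadline is June, but the
# training text says "may" more often than "june".
corpus = "the deadline is may the deadline is may the deadline is june"

model = train_bigrams(corpus)
print(most_likely_next(model, "is"))       # the most frequent answer, not the verified one
print(most_likely_next(model, "tuition"))  # a word the model never saw in training
```

The toy model has no notion of which deadline is correct. It only reports what it saw most often, which is why knowing how a tool was trained, and on what, matters when you judge its accuracy.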

You can also read more about responsible AI for higher education to explore AI governance.

Evaluating AI solutions for higher education

Now that you understand how AI works and what risks you need to mitigate, how do you choose an AI solution for your institution? There are several things you need to consider:

- Do the solutions follow responsible AI principles? This is a foundational question because responsible AI guides your entire approach to implementing and managing AI. This is the first question every institution should ask.

- Are the solutions secure? To get the most out of AI, you will want your AI solutions to handle sensitive student and institutional information, so they need to keep that information private and secure.

- Are the solutions free of bias and inaccuracy? This relates to responsible AI and security. Do your AI solutions provide factual information in a voice that aligns with your institution? Make sure you understand how the tools are trained so you can make a proper assessment about bias and inaccuracy.

We kept all of these factors and many others in mind when we developed the RNL Edge AI suite of solutions. Our solutions are secure, private, and rigorously trained, and developed specifically for higher education uses:

- RNL Compass is our digital assistant tool that seamlessly integrates with our CRM to provide 24/7 engagement to potential students, parents, and alumni. It can help answer questions about admissions, financial aid, fundraising, and more—accurately and in alignment with your voice.

- RNL Insights allows you to have a conversation with your CRM, applying the massive benefits of an LLM to your own data. You can effortlessly unlock strategic insights from your data without having to wait for reports or navigate multiple dashboards.

- RNL Answers is your internal chat tool to get things done at your institution, whether you need a concise summary of a donor record, a detailed analysis of ROI from your latest eighth-day enrollment report, or assistance in crafting personalized communications. It’s built on a secure server that ensures privacy.